Release: discursus Core v0.0.2

discursus Core v0.0.2 is now available on GitHub.

Our areas of focus were:

Improve the architecture of the project to make it easier to spin up an instance of discursus Core, as well as improve overall stability and performance.

Add an articles_fct entity that holds rich information about the articles that cover protest events.

We’re still a long way from a stable code base that lets end users effectively explore and discover protest events as they stream in, in near real time. But we’re getting there.

Please have a look at the release notes for full details.

Installation

Spinning up your own discursus instance still isn’t a breeze. But we know how important it is and are working on making our architecture and documentation more robust to that effect.

For now, to spin up an instance of discursus Core, you will first need to have your own external service accounts in place:

An AWS S3 bucket to hold the events, articles and enhancements.

An AWS EC2 instance to run discursus.

A Snowflake account to stage data from S3, perform transformations of data and expose entities.

On Snowflake, you will need to create a few objects prior to running your instance:

Source tables to stage the mined events.

Snowpipes to move data from S3 to your source tables.

File formats for Snowflake to read the source S3 CSV files properly.
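As a rough illustration of those three objects, here is a minimal Python sketch that builds the Snowflake DDL for one source table. Every object name, the column list and the comma delimiter are placeholders rather than the project's actual definitions, and the sketch assumes an external stage pointing at your S3 bucket already exists.

```python
def snowflake_setup_statements(table: str, stage: str, file_format: str, pipe: str) -> list[str]:
    """Return the DDL for one source table, in the order it should be run.

    All names are placeholders; `stage` is assumed to be an existing
    external stage that points at the S3 bucket.
    """
    return [
        # File format so Snowflake can parse the CSV files stored in S3.
        # The delimiter is an assumption; match it to what the miner writes.
        f"CREATE FILE FORMAT IF NOT EXISTS {file_format} "
        "TYPE = 'CSV' FIELD_DELIMITER = ',';",
        # Source table that stages the mined events (illustrative columns only).
        f"CREATE TABLE IF NOT EXISTS {table} (event_id STRING, source_url STRING);",
        # Snowpipe that copies staged files into the source table.
        f"CREATE PIPE IF NOT EXISTS {pipe} AS "
        f"COPY INTO {table} FROM @{stage} "
        f"FILE_FORMAT = (FORMAT_NAME = '{file_format}');",
    ]


statements = snowflake_setup_statements(
    table="events_src", stage="s3_stage", file_format="csv_fmt", pipe="events_pipe"
)
for statement in statements:
    print(statement)
```

You would run the printed statements in a Snowflake worksheet or through a client of your choice, once per source table.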

Once you have all those in place, you can fork the discursus Core repo.

The only thing left is to configure your instance:

Rename the Dockerfile_app.REPLACE file to Dockerfile_app.

Change the values of environment variables within the Dockerfile_app file.

Make any necessary changes to docker-compose.yml.

To run the Docker stack locally: docker compose -p "discursus-data-platform" --file docker-compose.yml up --build

Visit Dagster’s app: http://127.0.0.1:3000/
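The first two configuration steps can be scripted if you set up instances often. The sketch below is one possible approach, not part of the project: it assumes the template declares its variables as `ENV NAME=value` lines, the variable names and template content in the demo are made up, and it copies the template instead of renaming it so the original stays around.

```python
import tempfile
from pathlib import Path


def prepare_dockerfile(repo_dir: Path, env_values: dict[str, str]) -> Path:
    """Write Dockerfile_app from Dockerfile_app.REPLACE, filling in ENV values."""
    template = repo_dir / "Dockerfile_app.REPLACE"
    target = repo_dir / "Dockerfile_app"
    lines = []
    for line in template.read_text().splitlines():
        parts = line.split(maxsplit=1)
        # Only touch lines of the form `ENV NAME=value` whose NAME we were given.
        if len(parts) == 2 and parts[0] == "ENV" and "=" in parts[1]:
            name = parts[1].split("=", 1)[0]
            if name in env_values:
                line = f"ENV {name}={env_values[name]}"
        lines.append(line)
    target.write_text("\n".join(lines) + "\n")
    return target


# Demo against a throwaway directory with a made-up template.
with tempfile.TemporaryDirectory() as tmp:
    repo = Path(tmp)
    (repo / "Dockerfile_app.REPLACE").write_text(
        "FROM python:3.9\nENV SNOWFLAKE_ACCOUNT=REPLACE\nENV AWS_BUCKET=REPLACE\n"
    )
    result = prepare_dockerfile(repo, {"SNOWFLAKE_ACCOUNT": "my_account"}).read_text()

print(result)
```

Variables you do not pass in are left untouched, so a quick search for leftover placeholder values in the generated file tells you what still needs to be set by hand.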

Architecture

As of version 0.0.2, this is what the architecture looks like.

To quote from our introduction post, the main components of that architecture are:

A miner that sources events from the GDELT project and saves it to AWS S3.

A dbt project that creates a data warehouse which exposes protest events.

A Dagster orchestrator that schedules the mining and transformation pipelines.

You might have noticed that an enhancer component was also added to the architecture. This layer now includes a script that mines the metadata of the articles we are getting from GDELT.

But we have other plans for that layer, including introducing ML models to classify data points. More on that in the “What’s Ahead” section below.

Also, we changed where Snowpipes are called from. Previously, data was transferred from S3 to Snowflake only when we built the data warehouse. As this is less frequent than the actual mining of data to S3, the transfer could sometimes be quite large and the data warehouse was sometimes built without complete source data. Now data is transferred to Snowflake after each mining task.

Finally, we try to keep up with the latest releases of all libraries used in our stack, and that includes both dbt (upgraded to version 0.20) and Dagster (upgraded to version 0.12).
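To make the new ordering concrete, here is a minimal sketch of a mining cycle that asks Snowflake to load data right after each mining task. It is not the project's Dagster code: `run_mining_cycle`, the stub functions and the pipe name are hypothetical, and using `ALTER PIPE ... REFRESH` as the trigger is an assumption about how the pipe might be called.

```python
from typing import Callable


def run_mining_cycle(
    mine: Callable[[], str],
    execute_sql: Callable[[str], None],
    pipe: str,
) -> str:
    """Mine one batch to S3, then immediately ask Snowflake to load it."""
    s3_key = mine()
    # ALTER PIPE ... REFRESH queues staged files that have not been loaded yet.
    execute_sql(f"ALTER PIPE {pipe} REFRESH;")
    return s3_key


# Stubs that record the call order instead of touching S3 or Snowflake.
calls: list[str] = []


def fake_mine() -> str:
    calls.append("mine")
    return "events/batch_001.csv"


def fake_execute(sql: str) -> None:
    calls.append(sql)


for _ in range(2):
    run_mining_cycle(fake_mine, fake_execute, "events_pipe")

print(calls)
```

Because the refresh follows every mining task, each transfer stays small and the source tables are already up to date whenever the warehouse build runs.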

Exposing Media Coverage

These architecture changes allowed us to mine, enrich, transform and expose the articles that cover protest events.

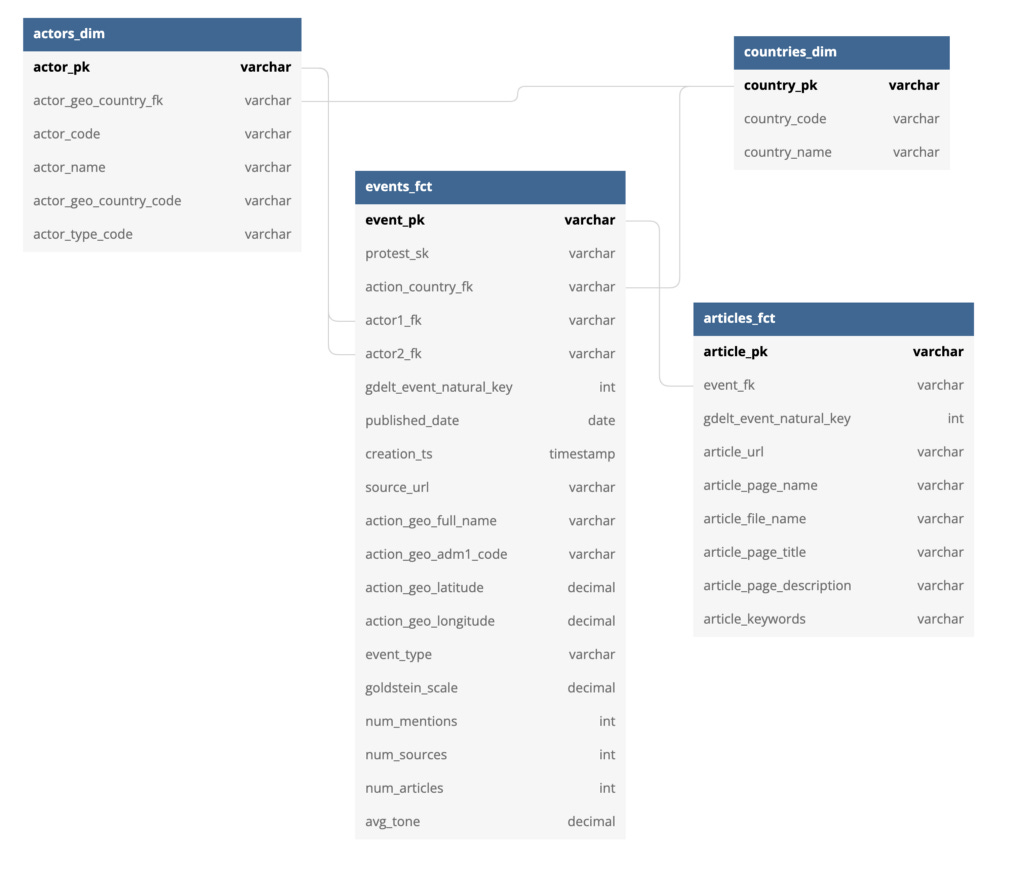

Here is version 0.0.2 of our entity relationship diagram (ERD).

And here is version 0.0.2 of our data warehouse transformation flow (DAG).

An articles_fct table is useful for getting more textual context about what an event is about. We will use that data to better filter which events are actual protest events that we want to let into our data platform. We will also eventually use that information to join events together, so we can start digging into all the events associated with a single protest movement.

What’s Ahead

As part of a collaboration with NovaceneAI, we will start adding ML enrichments to the project. This will become an additional external service (like Snowflake, AWS EC2 and AWS S3) for an instance.

The initial goal is to improve how we classify the relevancy of protest events mined from GDELT. At the moment, we are using a bunch of arbitrary rules to keep only the relevant protest events, but even with such a tight net, we still have irrelevant protest events coming in. Our analysis also showed that some relevant protest events were being filtered out.
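To illustrate why fixed rules fall short, here is a small sketch of a rule-based filter applied to invented sample events. The rule itself (CAMEO root code 14, which denotes protest, plus a minimum number of mentions), the field names and the hand-assigned labels are all hypothetical and are not the rules discursus actually uses.

```python
from dataclasses import dataclass


@dataclass
class Event:
    event_root_code: str  # CAMEO root code; "14" is the protest category
    num_mentions: int
    is_protest: bool  # hand-assigned label, only used to evaluate the rules


def passes_rules(event: Event, min_mentions: int = 5) -> bool:
    """Hypothetical rule: protest root code and enough mentions."""
    return event.event_root_code == "14" and event.num_mentions >= min_mentions


# Invented sample, one event per outcome.
sample = [
    Event("14", 12, True),   # kept and relevant
    Event("14", 30, False),  # kept but irrelevant (false positive)
    Event("14", 2, True),    # dropped but relevant (false negative)
    Event("04", 50, False),  # dropped and irrelevant
]

kept = [e for e in sample if passes_rules(e)]
false_positives = [e for e in kept if not e.is_protest]
false_negatives = [e for e in sample if e.is_protest and not passes_rules(e)]

print(len(kept), len(false_positives), len(false_negatives))
```

Tightening the threshold removes some false positives at the cost of more false negatives, which is the trade-off a trained classifier is meant to handle better.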

Version 0.0.3 will mostly be about working with the NovaceneAI group to set up an instance that will allow us to train and deploy a model, which we’ll use to classify incoming GDELT events through their API.

Finally, we will also make 2 important upgrades to our library dependencies:

Moving dbt to version 0.21, which is the last release before version 1.

Moving Dagster to version 0.13, which has some very important conceptual changes in its API.

If this project is of interest, there are many ways you can contribute and help discursus Core. Here are a few:

Star this repo, subscribe to our blog and follow us on LinkedIn.

Fork this repo, run an instance yourself and please 🙏 help us out with documentation.

Take ownership of some of the issues we already documented, and send over some PRs.

Create issues every time you feel something is missing or goes wrong.

All sorts of contributions are welcome and extremely helpful 🙌